Published on 01/07/2020

It is hard to find a free meeting slot with Luca Finelli these days. A physicist by training, he hatched the concept for the control tower for clinical trials known as SENSE. He is now wading knee-deep in work to accelerate the digitization of Novartis and spur the use of artificial intelligence (AI) tools such as machine learning across the company.

Among their many current engagements, Luca Finelli and his Insights Strategy and Design (ISD) team are supporting Novartis Technical Operations in its effort to digitize its global operations, which span more than 60 manufacturing sites. They are also supporting Global Drug Development to bring order and predictive analytics to the financial planning of the hundreds of clinical trials the division conducts every year.

SENSE allows Novartis to maintain an overview of its more than 500 ongoing clinical studies in one place and analyze their progress in real time. In much the same spirit, the entrepreneurial ISD team, which includes Davide Franco, Gernot Weber, Robert McGregor and David Heard, is aiming to transform tedious manual procedures into fast, predictive processes.

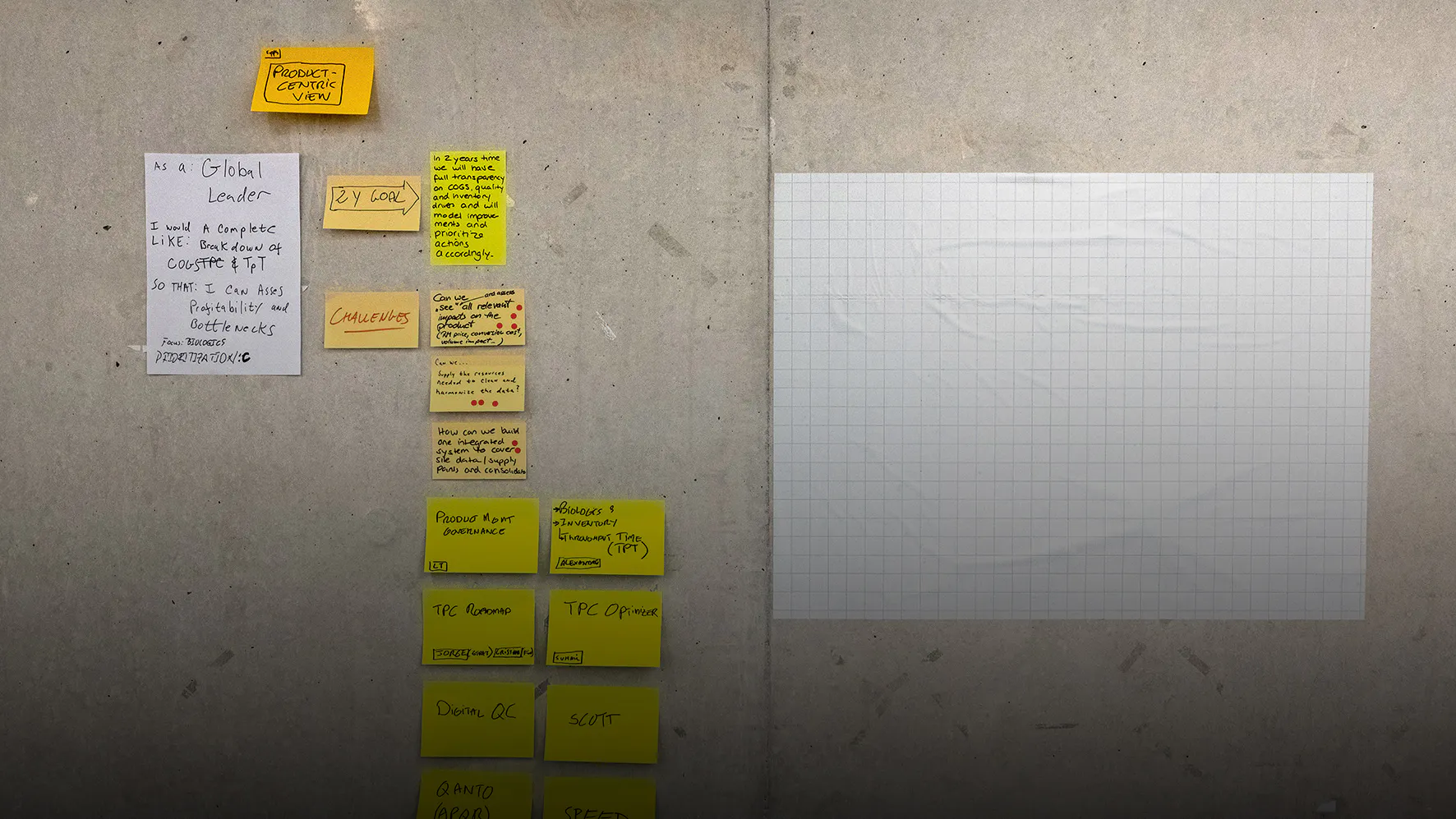

As part of their collaboration with Novartis Technical Operations, the team is helping the manufacturing division increase the visibility and efficiency of its operations. Real-time data is used to aid sites with daily operations, understand site-level performance, assess risks and predict which actions are best to take. The system should also help build transparency regarding products, inventories and the overall supply chain.

In a similar way, the team is also setting up an AI solution called Dynamo to improve investment planning for clinical trials. Instead of having teams guesstimate future trial expenses and then having colleagues from finance correct the costs year after year, the ISD team, in partnership with GDD Finance and the GDO function, is creating algorithms that learn from past clinical expense and operational data and predict future trial costs.

“Dynamo is our latest solution as part of the Nerve Live program. What we want to achieve with Dynamo is to take the actual spend data of the past years, have algorithms learn how these patterns go, and then, when it comes to a new trial, predict how the spend will develop and then compare those predictions to help associates make better budget plans,” Luca Finelli says.
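The idea of learning from past spend and then checking a new budget plan against the prediction can be illustrated with a deliberately small sketch. It is not how Dynamo works internally, which the article does not describe. It assumes a single hypothetical driver of cost (the number of enrolled patients), uses a plain least-squares line in place of the machine learning models mentioned above, and all names (`PastTrial`, `fit_spend_model`, `budget_gap`) and figures are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PastTrial:
    patients: int        # hypothetical operational feature
    actual_spend: float  # hypothetical actual spend, arbitrary currency units


def fit_spend_model(history: List[PastTrial]) -> Tuple[float, float]:
    """Least-squares fit of: spend = intercept + slope * patients."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two past trials to fit a line")
    mean_x = sum(t.patients for t in history) / n
    mean_y = sum(t.actual_spend for t in history) / n
    sxx = sum((t.patients - mean_x) ** 2 for t in history)
    if sxx == 0:
        raise ValueError("all past trials have the same patient count")
    sxy = sum((t.patients - mean_x) * (t.actual_spend - mean_y) for t in history)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict_spend(model: Tuple[float, float], patients: int) -> float:
    """Predicted spend for a new trial with the given patient count."""
    intercept, slope = model
    return intercept + slope * patients


def budget_gap(model: Tuple[float, float], patients: int, planned_budget: float) -> float:
    """Predicted spend minus planned budget; positive means the plan looks too low."""
    return predict_spend(model, patients) - planned_budget


# Invented example data, for illustration only.
history = [PastTrial(100, 3.0), PastTrial(200, 5.0), PastTrial(300, 7.0)]
model = fit_spend_model(history)
gap = budget_gap(model, patients=250, planned_budget=5.0)
print(f"predicted: {predict_spend(model, 250):.2f}, gap vs. plan: {gap:+.2f}")
```

A real system would draw on many more operational variables and on spend over time rather than a single total, but the shape of the workflow is the same: fit on actuals, predict for the new trial, and surface the difference to the person planning the budget.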

Augmented intelligence

The goal, Finelli stresses, is not to replace associates with supercomputers, but rather to support them in their everyday tasks and eliminate manual, cumbersome and error-prone processes. This is also the reason Luca Finelli dislikes the overuse of the term artificial intelligence. For him, the term carries misleading associations of an all-powerful digital entity that may soon replace traditional workers and – like in the dystopian visions of thinkers such as Yuval Harari – take full control over our lives.

“We are not trying to replace humans with artificial intelligence,” Finelli explains. “All these tools that we are building are in fact helping humans to do their jobs better, so they have the information they need at their fingertips when they are planning and executing their daily business.”

This, he says, is the concept of augmented intelligence, which his group is trying to popularize across Novartis. “We are using the power of data and data science and artificial intelligence to help people’s own intelligence, augmenting it to be more informed and help them make better decisions.”

Huge potential ...

Finelli is convinced that the new AI systems will help people find better solutions faster and enhance efficiency across the board.

“With the rapid progress in the realm of AI, we are experiencing a phase in which knowledge is created in a different way than in the past,” Luca Finelli explains. What in the past took generations of scientists to achieve in order to advance human knowledge can now be done almost in the blink of an eye.

“When powerful machine learning and deep-learning algorithms tackle a problem, they can find new and sometimes very surprising solutions that can lead to knowledge gains and performance improvements as high as 20 percent.”

When DeepMind’s AI-driven software beat human players in the game of Go in 2016 – a feat that had been deemed nearly impossible a decade earlier – it did more than win by a wide margin. The system also developed game strategies that took everyone by surprise. Since then, AI systems have repeatedly shown themselves capable of finding solutions that beat human ingenuity.

... and risks

But AI has also shown that it can go completely haywire and come up with wrong solutions, especially when the data quality is poor or algorithms are riddled with inherent biases that developers overlooked.

Critics have rung the alarm bell. One of them, Jonathan Zittrain, a Harvard Law School professor, recently compared AI to asbestos – the fire-insulating material used in construction until the 1980s, when researchers realized that it was causing cancer.

“I think of machine learning kind of as asbestos,” Stat quoted him as saying at the 2019 Precision Medicine conference in Boston. “It turns out that it’s all over the place, even though at no point did you explicitly install it, and it has possibly some latent bad effects that you might regret later, after it’s already too hard to get it all out.”

Zittrain was especially concerned that new digital systems could come to wrong conclusions when diagnosing patients and suggest the wrong therapies.

Even though the ISD team is focusing on operational rather than clinical and patient data, the group is fully aware that AI tools such as machine learning and the neural networks of deep learning, which parse the data autonomously and are difficult to understand even for experts, have their own inherent risks.

“If the data that we are feeding to the artificial intelligence for learning has gaps or has errors, then the algorithms will learn wrongly and miss the stuff that is in the gaps. The outcome will definitely be imperfect,” Finelli says.

To avoid this, Luca Finelli and his team are creating Dr. Agent (short for Data Readiness Agent), “an application which goes through all the data stored in our Nerve Live platform and constantly checks for inconsistencies and probes the data against certain rules in order to ensure maximum quality and compliance within our data lake.”
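What “probing the data against certain rules” might look like can be sketched in a few lines. The example below is only an illustration under stated assumptions: the article does not describe Dr. Agent's rules or data model, so the record fields (`trial_id`, `spend`, `start`, `end`) and the three rules are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]
Rule = Tuple[str, Callable[[Record], bool]]


def has_trial_id(record: Record) -> bool:
    """The record must carry a non-empty trial identifier."""
    value = record.get("trial_id")
    return isinstance(value, str) and value.strip() != ""


def spend_is_valid(record: Record) -> bool:
    """Spend must be a real number and must not be negative."""
    value = record.get("spend")
    return isinstance(value, (int, float)) and not isinstance(value, bool) and value >= 0


def dates_in_order(record: Record) -> bool:
    """Start and end are ISO 'YYYY-MM' strings, so string comparison orders them."""
    start, end = record.get("start"), record.get("end")
    return isinstance(start, str) and isinstance(end, str) and start <= end


# Hypothetical rule set; a real one would be defined with the data owners.
RULES: List[Rule] = [
    ("trial_id is present", has_trial_id),
    ("spend is a non-negative number", spend_is_valid),
    ("start is not after end", dates_in_order),
]


def check_records(records: List[Record], rules: List[Rule] = RULES) -> List[Tuple[int, str]]:
    """Return (record index, rule name) for every rule a record breaks."""
    violations = []
    for index, record in enumerate(records):
        for name, rule in rules:
            if not rule(record):
                violations.append((index, name))
    return violations


# Invented records, for illustration only.
records = [
    {"trial_id": "T-001", "spend": 10.0, "start": "2019-01", "end": "2019-06"},
    {"trial_id": "", "spend": -5, "start": "2019-07", "end": "2019-03"},
]
for index, name in check_records(records):
    print(f"record {index}: failed rule '{name}'")
```

Keeping each rule as a small named function makes it easy to report exactly which check a record failed, which matters when people, not only algorithms, have to act on the findings.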

Finelli also wants to avoid errors that are not immediately visible. “Every time you select a certain dataset, or you program a specific algorithm, these may have a number of limitations or constraints which are hidden in the data generation process or algorithm architecture itself. This is known as ‘selection bias’ and means that the data is not representative of the population intended to be analyzed and will convey only certain aspects of the underlying objects under investigation.”

Responsibility and ethics

The ISD team, which is now in the design phase of Dr. Agent, wants the system to be aware of these inherent limitations and bias too.

“We have a big responsibility to constantly check our data,” Finelli declares. “Both data scientists and business people have to take this seriously. We have to test our data and algorithms very carefully to assure that the outcome is not biased and that we are not being led to wrong conclusions.”

As AI-driven systems are becoming ever more complex and are about to be a key source of decision-making, ethics is increasingly important too. “We are also thinking hard about ethical issues. We want to make sure we are aware of the potential inconsistencies and bias that may be the result of how we select and structure our data. Without it, we may not get the full potential of artificial intelligence and miss a chance that we may not get again so easily.”

If AI fails to live up to its potential, the result may not only be a decline in public interest. It may also deter investors and thereby bury the high hopes people have that AI could turn into a key performance driver in the years to come. Such concerns are not far-fetched. When AI researchers in the 1970s promoted the idea that they would soon be able to engineer computers with human-level intelligence, they attracted global investor interest. But when they failed to live up to expectations, the market turned sour. What followed was a period of low investment and limited research – known as the AI winter.

If AI generates wrong results, another winter might follow, Finelli warns. But systems such as Dr. Agent, he says, are one way to keep such risks at bay.